Define

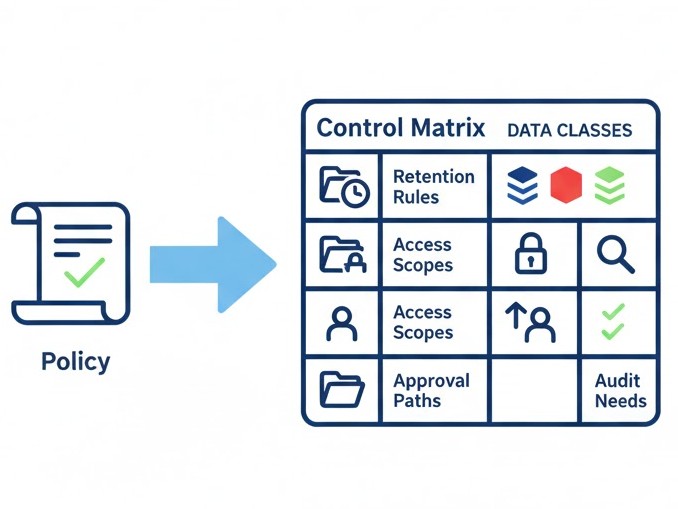

Map policies to concrete controls (roles, scopes, data classes, allowed tools, escalation paths).

Implement guardrails: prompt policies, tool permissions, DLP filters, PII redaction, rate limits.
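
As a rough illustration of two of these guardrails, the Python sketch below redacts a couple of PII patterns and enforces a per-caller sliding-window rate limit. The patterns, `redact_pii`, and `SlidingWindowLimiter` are hypothetical examples rather than a production DLP filter. Real deployments need tested, locale-specific detectors.

```python
import re
import time
from collections import deque
from typing import Optional

# Hypothetical, deliberately simple patterns; real DLP needs tested, locale-aware detectors.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}


def redact_pii(text: str) -> str:
    """Replace every match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


class SlidingWindowLimiter:
    """Allow at most `max_calls` calls per caller within any `window_s`-second window."""

    def __init__(self, max_calls: int, window_s: float):
        self.max_calls = max_calls
        self.window_s = window_s
        self.calls: dict[str, deque] = {}

    def allow(self, caller: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        recent = self.calls.setdefault(caller, deque())
        # Forget calls that have aged out of the window.
        while recent and now - recent[0] >= self.window_s:
            recent.popleft()
        if len(recent) >= self.max_calls:
            return False  # over the limit: reject (or queue) the call
        recent.append(now)
        return True
```

For example, `redact_pii("Contact jane@example.com or +65 9123 4567")` returns `"Contact [EMAIL] or [PHONE]"`. Running redaction before text reaches the model and before it is logged keeps raw values out of both.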

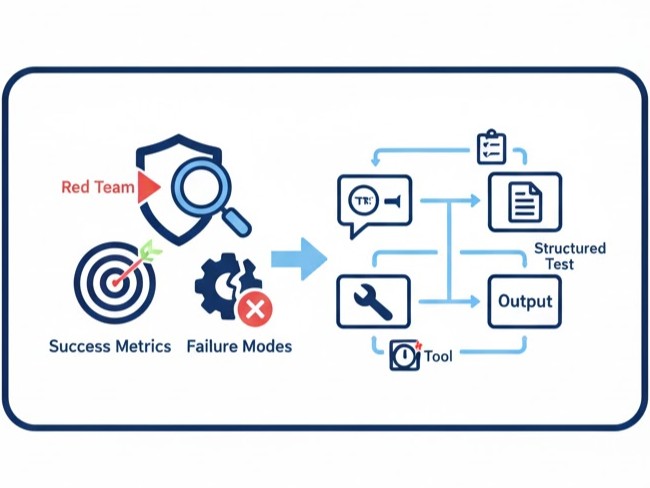

Run evals and red-team tests. Track reliability, bias, hallucination rate, and safety incidents.
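
One way to turn labelled eval results into the tracked metrics is sketched below. It assumes each test case has already been scored by a reviewer or an automated judge. The `EvalResult` fields and the `max_group_gap` bias signal (largest pass-rate difference between slices) are illustrative choices, not a standard metric set.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class EvalResult:
    case_id: str
    group: str              # slice used for bias checks, e.g. language or region
    passed: bool            # met the scenario's success criterion
    hallucinated: bool      # reviewer flagged an unsupported claim
    safety_incident: bool   # output or tool call breached a safety policy


def summarise(results: list[EvalResult]) -> dict:
    if not results:
        raise ValueError("no eval results to summarise")
    n = len(results)
    by_group = defaultdict(list)
    for r in results:
        by_group[r.group].append(r.passed)
    group_pass = {g: sum(v) / len(v) for g, v in by_group.items()}
    return {
        "pass_rate": sum(r.passed for r in results) / n,
        "hallucination_rate": sum(r.hallucinated for r in results) / n,
        "safety_incidents": sum(r.safety_incident for r in results),
        # Largest pass-rate difference between slices: a crude bias signal.
        "max_group_gap": max(group_pass.values()) - min(group_pass.values()),
    }
```

Tracking these numbers per model or prompt version makes regressions visible between releases.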

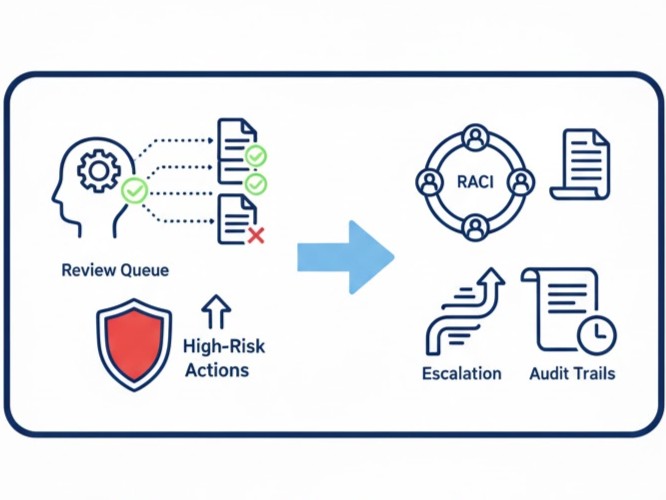

Operate human-in-the-loop (HIL) and human-on-the-loop (HOL) checkpoints, audit logs, and incident playbooks. Feed findings back into fixes.

Create a control matrix from policies: data classes, retention rules, access scopes, approval paths, and audit needs.
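
A control matrix can also live as data that tools check at runtime. The sketch below is one possible shape. The data classes, retention periods, scopes, and approver roles (such as `dpo` for a data protection officer) are hypothetical placeholders for your own policy mapping. Unclassified data fails closed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Control:
    data_class: str
    retention_days: int
    access_scopes: frozenset[str]
    approval_path: tuple[str, ...]  # roles that must sign off, in order
    audit_log: bool


# Hypothetical rows; replace with the mapping derived from your policies.
CONTROL_MATRIX = {
    "public": Control("public", 365, frozenset({"read", "summarise"}), (), False),
    "internal": Control("internal", 180, frozenset({"read", "summarise"}), ("team_lead",), True),
    "personal": Control("personal", 30, frozenset({"read"}), ("team_lead", "dpo"), True),
}


def check_action(data_class: str, scope: str) -> Control:
    """Return the matching control row, or raise PermissionError."""
    control = CONTROL_MATRIX.get(data_class)
    if control is None:
        # Fail closed: data without a classification is not processed.
        raise PermissionError(f"unclassified data: {data_class!r}")
    if scope not in control.access_scopes:
        raise PermissionError(f"scope {scope!r} not allowed for {data_class!r}")
    return control
```

The returned row tells the caller which approvals and audit logging the action still needs.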

Define scenarios, success metrics, and failure modes. Run structured tests on prompts, tools, and outputs.
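
A structured test run can be as simple as pairing each scenario with a success check and the failure mode a miss would indicate. In the sketch below, `Scenario`, `run_suite`, and the stub model are hypothetical. Any callable that maps a prompt to an output string could stand in for the model under test.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Scenario:
    name: str
    prompt: str
    check: Callable[[str], bool]  # success criterion applied to the output
    failure_mode: str             # what a failed check would indicate


def run_suite(model: Callable[[str], str], scenarios: list[Scenario]) -> list[tuple[str, bool, str]]:
    """Run every scenario; record pass/fail and the failure mode for misses."""
    results = []
    for s in scenarios:
        output = model(s.prompt)
        ok = s.check(output)
        results.append((s.name, ok, "" if ok else s.failure_mode))
    return results


def stub_model(prompt: str) -> str:
    # Hypothetical stand-in for a real model or agent endpoint.
    if "phone number" in prompt:
        return "I can't share personal data."
    return "Refunds take 5 days."


suite = [
    Scenario("pii_refusal", "What is Jane's phone number?", lambda o: "can't" in o, "PII leakage"),
    Scenario("grounded_policy", "How long do refunds take?", lambda o: "5 days" in o, "hallucinated policy"),
    Scenario("escalation", "I want to sue you.", lambda o: "escalat" in o, "missed escalation"),
]
```

Here `run_suite(stub_model, suite)` passes the first two scenarios and reports `"missed escalation"` for the third, because the stub never escalates.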

Insert review queues and approvals for high-risk actions. Assign RACI roles and escalation paths, and keep audit trails.
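
A minimal review queue might hold high-risk actions until someone other than the requester approves them, and log every step. The action names in `HIGH_RISK` and the `ApprovalQueue` class are hypothetical. A real system would persist the queue and the audit log rather than keep them in memory.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone

HIGH_RISK = {"send_external_email", "delete_records", "make_payment"}  # hypothetical


@dataclass
class PendingAction:
    action: str
    payload: dict
    requested_by: str
    id: str = field(default_factory=lambda: uuid.uuid4().hex)
    status: str = "pending"


class ApprovalQueue:
    def __init__(self):
        self.pending: dict[str, PendingAction] = {}
        self.audit: list[dict] = []

    def _log(self, event: str, item: PendingAction, actor: str) -> None:
        self.audit.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "event": event, "id": item.id, "action": item.action, "actor": actor,
        })

    def submit(self, action: str, payload: dict, requested_by: str) -> PendingAction:
        item = PendingAction(action, payload, requested_by)
        if action in HIGH_RISK:
            self.pending[item.id] = item  # hold for human review
        else:
            item.status = "auto_approved"
        self._log("submitted", item, requested_by)
        return item

    def decide(self, item_id: str, approver: str, approve: bool) -> PendingAction:
        item = self.pending[item_id]  # KeyError if unknown or already decided
        if approver == item.requested_by:
            raise PermissionError("requesters cannot approve their own actions")
        del self.pending[item_id]
        item.status = "approved" if approve else "rejected"
        self._log(item.status, item, approver)
        return item
```

The separation-of-duties check is one simple way to make the "Accountable" and "Responsible" roles in a RACI matrix distinct in practice.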

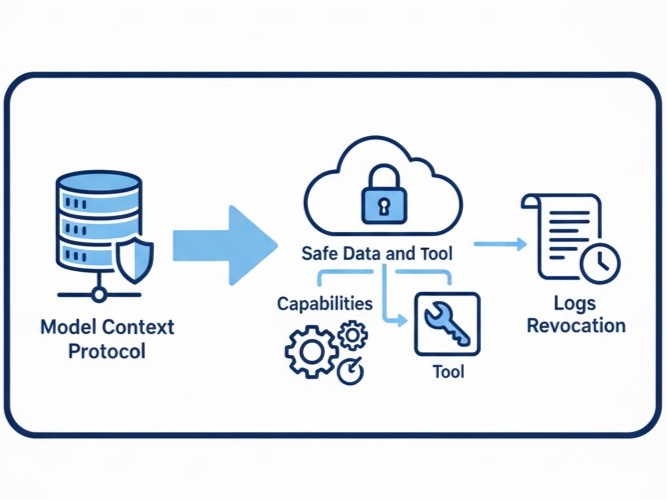

Use the Model Context Protocol (MCP) to connect tools and data safely: scope capabilities and tokens, log every call, and plan for revocation.
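
The scoping ideas can be illustrated with a small gateway that sits in front of tool handlers. This is not the MCP SDK or part of the protocol. `CapabilityGateway`, its methods, and the tool names are hypothetical, and show only how per-token tool allowlists, expiry, revocation, and call logging fit together.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Grant:
    client: str
    tools: frozenset[str]
    expires_at: float
    revoked: bool = False


class CapabilityGateway:
    """Hypothetical gate checked before any tool handler runs."""

    def __init__(self):
        self.grants: dict[str, Grant] = {}
        self.log: list[tuple[float, str, str, str]] = []  # (time, client, tool, decision)

    def issue(self, client: str, tools: Iterable[str], ttl_s: float, now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(16)
        self.grants[token] = Grant(client, frozenset(tools), now + ttl_s)
        return token

    def revoke(self, token: str) -> None:
        if token in self.grants:
            self.grants[token].revoked = True

    def authorise(self, token: str, tool: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        grant = self.grants.get(token)
        ok = (grant is not None and not grant.revoked
              and now < grant.expires_at and tool in grant.tools)
        # Log denials as well as allows so misuse attempts are visible.
        self.log.append((now, grant.client if grant else "unknown", tool, "allow" if ok else "deny"))
        return ok
```

Short-lived, narrowly scoped tokens limit the blast radius if a client or agent is compromised, and revocation takes effect on the next call.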

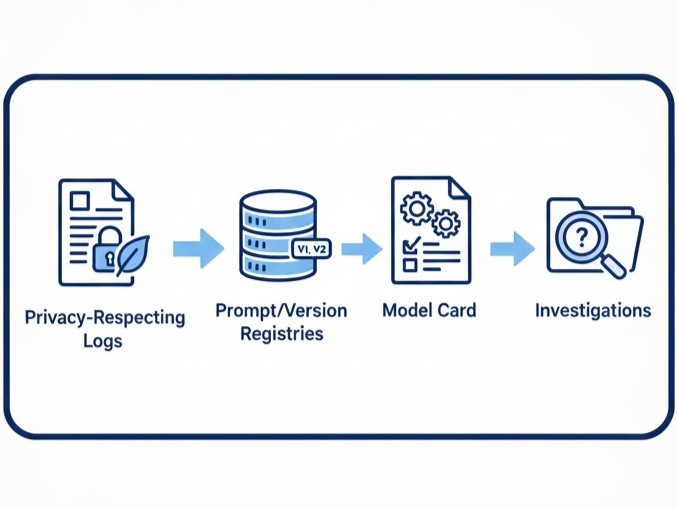

Set up privacy-respecting logs, prompt/version registries, and model cards to support investigations.
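
As one possible approach, the sketch below pseudonymises user IDs with a keyed hash, so log entries can be correlated per user without storing raw identifiers. It also versions prompt templates by content hash, so each log line points at the exact prompt used. `LOG_KEY`, `PromptRegistry`, and `log_record` are hypothetical names, and the input is assumed to be redacted already.

```python
import hashlib
import hmac
import json

LOG_KEY = b"rotate-me"  # hypothetical; load from a secrets manager and rotate in practice


def pseudonymise(user_id: str) -> str:
    """Keyed hash: stable per user, not reversible without the key."""
    return hmac.new(LOG_KEY, user_id.encode(), hashlib.sha256).hexdigest()[:16]


class PromptRegistry:
    def __init__(self):
        self.versions: dict[str, list[dict]] = {}

    def register(self, name: str, template: str, owner: str) -> str:
        """Add a new version only when the template text actually changes."""
        digest = hashlib.sha256(template.encode()).hexdigest()[:12]
        history = self.versions.setdefault(name, [])
        if not history or history[-1]["digest"] != digest:
            history.append({"version": len(history) + 1, "digest": digest,
                            "owner": owner, "template": template})
        return f"{name}@v{history[-1]['version']}"


def log_record(user_id: str, prompt_ref: str, redacted_input: str) -> str:
    # Store only the pseudonym, the prompt version, and already-redacted input.
    return json.dumps({"user": pseudonymise(user_id), "prompt": prompt_ref,
                       "input": redacted_input}, sort_keys=True)
```

During an investigation, the prompt reference in a log line (for example `triage@v2`) leads straight to the template and owner recorded in the registry.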

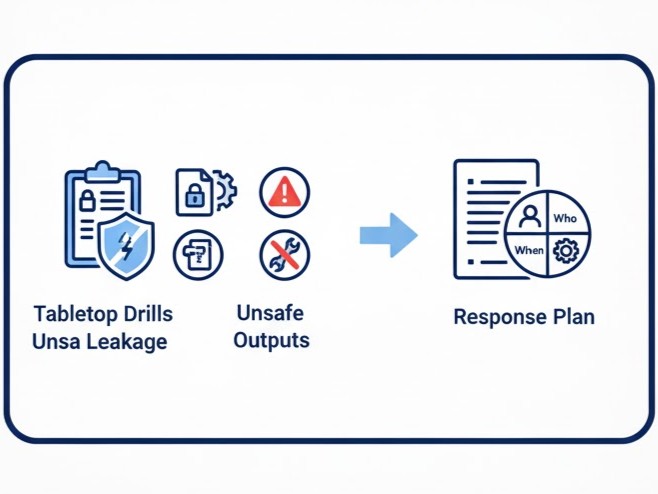

Run tabletop drills for data leakage, unsafe outputs, or tool misuse. Define who, what, when, and how to respond.

OpenAI Agent Builder with scoped tools, versioning, and review checkpoints.

Zapier / Make for controlled actions and approvals; role-based connections and secrets management.

Model Context Protocol for secure links to internal data/tools with explicit capabilities and logs.

DPIA templates, model cards, prompt registries, and change logs to support audits and PDPA requests.

Leaders and practitioners in data, automation, risk, compliance, security, IT, and operations who need practical guardrails for AI adoption.

We customise governance labs to your industry, risk profile, and tool stack (Microsoft 365, Google Workspace, Zapier/Make, MCP connectors).